Intro to Reality Pentesting

A Conceptual Field Topology for Proactive Cognitive Defense

A framework for mapping and red-teaming the human cognitive attack surface.

The Problem

For years, the security industry has known that the easiest way to compromise a system is to hack the human operating it. Yet as a field, we remained convinced we could harden technical defenses to such a degree that the human element would become irrelevant.

Needless to say, that bet hasn’t paid off.

Adversaries have noticed the same asymmetry we have, and have changed tactics in response. Criminal scam gangs have consolidated into full-scale organizations, complete with HR departments, R&D divisions, and psychologists who manage staff morale when the cognitive dissonance of doing bad things to good people gets too loud. AI now augments these industrialized operations, letting them target vulnerable people in ways that were unthinkable five years ago. And the same capabilities are accessible to anyone with an API key and the motivation to use them.

The near-term horizon of this trend is precision cognitive targeting: simulating millions of attacks against your AI-powered digital twin before running the most optimized version against you.

We have approximately five billion connected minds on this planet, and most are running unpatched cognitive firmware with no CVE database, patch cycle, or incident response protocol. The cyber industry has spent decades hardening the technical OSI stack, but the human is still rarely resourced the same way. Our frameworks, certifications, and standards reflect the threats we’ve prioritized and readily understood, not necessarily the ones that are most exploitable.

Originally, my intention for exploring the concept of “Reality Pentesting” was to create a framework for how we might run adversarial-minded security tests against human perception. At the time of this write-up, I have mapped the topological attack surface, correlated security testing domains with cognitive analogs, and identified several issues we must resolve before deploying such a framework. I am continuing my research, conceptualization, and collaboration in earnest. What follows here is a summary of the field topology of human cognition: a five-layer model of the human cognitive field, its attack surfaces, and a proposal for what proactive defense might look like.

Bezmenov’s Long Game & the DDoS’d Infosphere

Before we map the attack surface, it’s worth understanding the strategic context.

Yuri Bezmenov was a KGB propagandist who defected to North America in the 1970s. His warning was stark: the United States was already fighting a war it didn’t know it was in. While Americans obsessed over Soviet-flavored spy thrillers, the KGB, by his account, was allocating 85% of its resources to a long-term form of psychological warfare he called “ideological subversion” and “demoralization.”

The goal was to erode democratic society’s ability to agree on what is real. The plan required decades of patient effort: slowly and systematically degrading the population’s capacity to process information and reach sensible conclusions. The timescale came from how long it would take to compromise the thinking of at least one generation.

A demoralized population, Bezmenov explained, can be shown true information and still reject it. Facts stop mattering once the epistemology is broken.

It’s alarming how accurately his description of a demoralized society from the early 1980s maps to our informational reality of the mid-2020s.

What once required decades of state-sponsored coordination now runs on automated systems accessible to anyone with an API key. In turn, the infosphere has effectively been DDoS’d: synthetic content is cheap, bot networks amplify at scale, and the sheer volume of conflicting information has pushed us well past our cognitive processing limits. Social proof signals, the heuristics we’ve relied on for millennia to determine what’s trustworthy, have been completely disrupted by the proliferation of bots and synthetic personas.

The result is heuristic infrastructure compromised at the source, with a good portion of those five billion connected minds virtually unaware the vulnerability even exists.

The Cognitive Field Topology

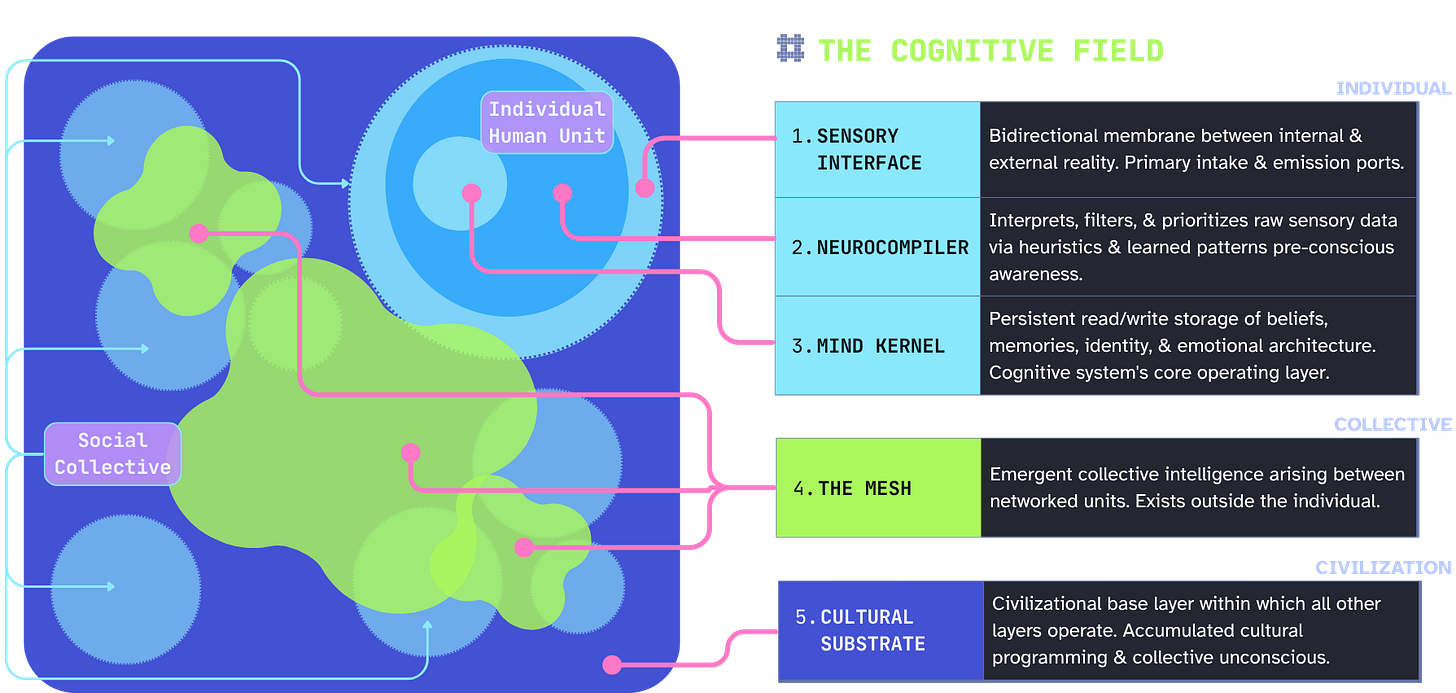

The model I’m proposing organizes human cognitive infrastructure into five layers across three scales: individual, collective, and civilizational.

The first three concentric layers make up an individual human unit:

Sensory Interface — Bidirectional membrane between internal and external reality.

NeuroCompiler — Interprets, filters, and prioritizes raw sensory data via heuristic processing prior to conscious awareness.

Mind Kernel — Persistent read/write storage of beliefs, memories, identity, & emotional architecture (ontological scaffolding). Cognitive system’s base operating layer.

(For a thorough-but-accessible breakdown of the neuroscience behind attention and perception, I highly recommend checking out Winn Schwartau’s The Art & Science of Metawar.)

Beyond the individual, we cross into the collective, or what I’m calling the Mesh. This is the emergent collective intelligence arising between networked individuals. The final layer is called the Cultural Substrate, and is the civilizational base layer for macro-society and the collective unconscious.

The terminology I’ve used is deliberate: an interface needs a compiler, which feeds into a kernel at the root access level. Kernels can also connect to one another and form a mesh. The mesh and kernels run on a substrate but cannot individually effect change in it.

Additionally, each layer inherits from and feeds back into the others, meaning compromise at any level can quickly cascade through the entire stack. Think of it as OSI Layer 8 (the theoretical “Human” layer) and beyond.
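To make the cascade claim concrete, here is a minimal Python sketch that encodes the five layers as a graph and walks a compromise outward from a single entry point. It assumes, for simplicity, that each layer links in both directions only to the layers directly above and below it in the stack; real coupling between layers is surely messier, and the names `build_stack` and `cascade` are illustrative, not part of any existing tool.

```python
from collections import deque

# Stack order, innermost individual layer first (from the topology above).
LAYERS = [
    "Sensory Interface",
    "NeuroCompiler",
    "Mind Kernel",
    "Mesh",
    "Cultural Substrate",
]


def build_stack(layers):
    """Assumption: each layer links both ways to the layers directly
    above and below it (it inherits from and feeds back into them)."""
    edges = {name: set() for name in layers}
    for lower, upper in zip(layers, layers[1:]):
        edges[lower].add(upper)  # influence flows upward
        edges[upper].add(lower)  # and feeds back downward
    return edges


def cascade(edges, entry_point):
    """Breadth-first spread of a compromise from one entry layer.
    Returns {layer: hops from entry_point} for every reachable layer."""
    hops = {entry_point: 0}
    queue = deque([entry_point])
    while queue:
        layer = queue.popleft()
        for neighbor in sorted(edges[layer]):
            if neighbor not in hops:
                hops[neighbor] = hops[layer] + 1
                queue.append(neighbor)
    return hops


stack = build_stack(LAYERS)
for layer, distance in cascade(stack, "NeuroCompiler").items():
    print(f"{distance} hop(s): {layer}")
```

Starting from the NeuroCompiler, the walk reaches the Mind Kernel and Sensory Interface in one hop and the Cultural Substrate in three, so in this toy model no layer is out of reach from any entry point.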

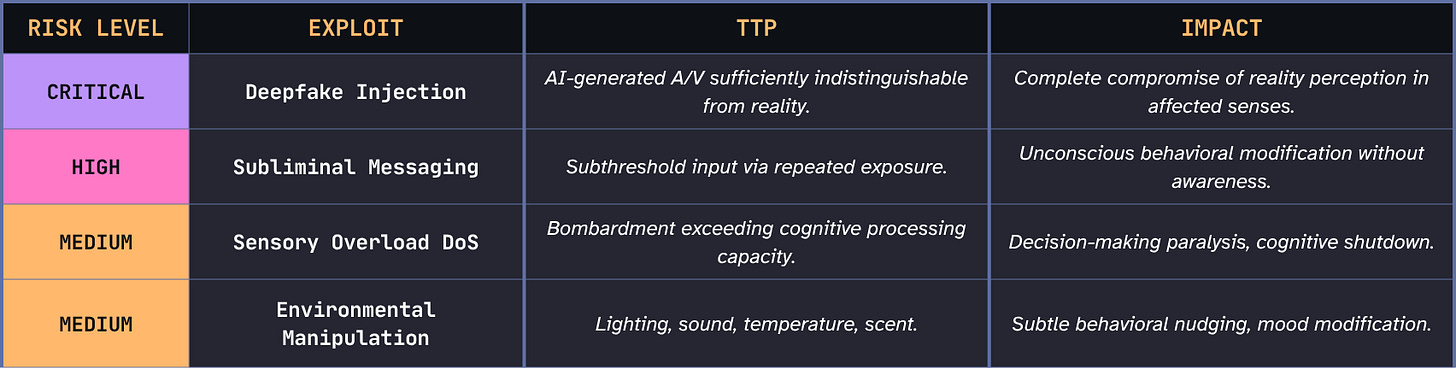

Layer 1: Sensory Interface

Reality’s I/O Ports

Scale: Individual

Function: Bidirectional membrane between internal and external reality.

The Sensory Interface is where reality and perception first make contact. Everything the cognitive system will eventually believe, decide, or act on enters here first. This makes it both the most accessible and the most implicitly trusted layer in the topology.

The key architectural insight is this layer’s bidirectionality. We generally think of the senses as receivers, but everything we emit—speech, facial expression, movement, behavioral patterns—exits through this same interface to become another person’s sensory input.

What we put out can be captured, analyzed, profiled, and used to craft precisely targeted inputs back at our own interface. In cyber, we’ve started calling this Personally Identifiable Behavior (PIB), following the lead of industry visionary Winn Schwartau. Voice cloning works because your voice is a sensory output that’s been harvested. Deepfakes work because your face and mannerisms are sensory outputs that have been modeled. The attack surface therefore includes what you emit, not just what you receive.

This layer is exploitable because it carries an implicit trust assumption we rarely examine. We trust our senses by default... we have to! There’s no practical alternative. You cannot verify every sensory input in real time before allowing it to proceed into processing. At present, our cognitive architecture isn’t built for a world where realistic synthetic stimuli are cheap and sufficiently indistinguishable from organic ones.

Exploit Examples

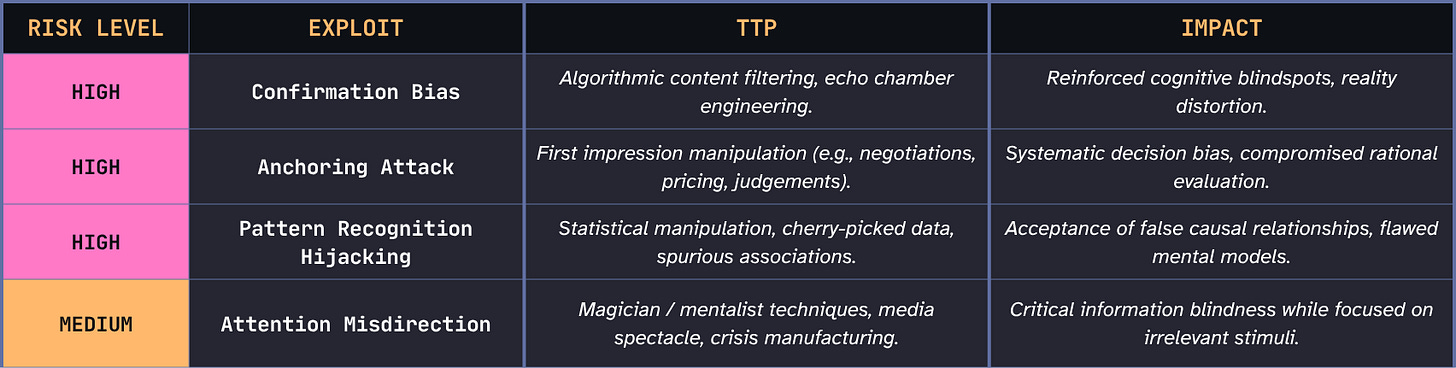

Layer 2: NeuroCompiler

The “Good Enough” Processing Engine

Scale: Individual

Function: Interprets, filters, and prioritizes raw sensory data via heuristic processing prior to conscious awareness.

The NeuroCompiler is where raw sensory data gets interpreted before you’re consciously aware of it. It decides what things mean, and it does so quickly, automatically, and mostly invisibly. It’s also where the majority of cognitive exploits actually land: in the sweet spot between perception and conscious thought.

This is my term for what Daniel Kahneman called System 1 thinking. If the Sensory Interface is the intake port, the NeuroCompiler is what turns that input into “filtered meaning” before the Mind Kernel ever sees it. It takes raw signal (e.g., photons, sound waves, chemical gradients, pressure) and translates it into something actionable based on binary categories like threat or safe, familiar or novel, trustworthy or suspicious.

The speed is both an evolutionary feature and a modern bug. Processing here is fast enough to get you out of the way of a thrown object before you’ve consciously registered it. But “good enough most of the time” means “predictably wrong some of the time.” These systematic errors are extensively documented, consistent across populations, and exploitable by anyone who studies them. From an adversarial perspective, the catalog of cognitive biases is a targeting manual.

Magicians and mentalists have understood this for centuries without the academic vocabulary. Their entire discipline is built on exploiting how pre-conscious processing works. Penn & Teller’s observation about stagecraft, that you can predict with near-perfect accuracy where attention will go if you give it a small nudge, is an astute cognitive security insight.

A critical architectural feature: the NeuroCompiler can route its output directly back to the Sensory Interface and out as behavior, skipping the conscious awareness of the Mind Kernel entirely. Reflex and startle responses use this mechanism, making this bypass pathway enormously useful for survival. Yet it leaves a wide-open backdoor. If the layer that holds access to skepticism and deliberate evaluation can be bypassed completely, a host of exploits become possible that would otherwise fail.
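The bypass pathway can be sketched as a routing decision. In this hypothetical Python model, the NeuroCompiler labels each stimulus and, when threat or urgency cues cross a threshold, sends it straight back out as behavior; only inputs below that threshold reach the Mind Kernel, where a low-familiarity input triggers verification. The cue scores, the `REFLEX_THRESHOLD` value, and the function names are assumptions made for illustration.

```python
from dataclasses import dataclass

REFLEX_THRESHOLD = 0.8  # hypothetical cutoff for the fast, pre-conscious path


@dataclass
class Stimulus:
    description: str
    threat_cue: float   # 0-1: hypothetical strength of urgency/threat cues
    familiarity: float  # 0-1: hypothetical "I know this source" signal


def neurocompiler(stimulus):
    """Fast heuristic pass: label the input, then pick a route."""
    label = "threat" if stimulus.threat_cue >= 0.5 else "safe"
    if stimulus.threat_cue >= REFLEX_THRESHOLD:
        return "reflex", label  # bypass: straight back out as behavior
    return "kernel", label      # forward for deliberate evaluation


def mind_kernel(stimulus):
    """Slow, deliberate evaluation: where skepticism can intervene."""
    return "verify before acting" if stimulus.familiarity < 0.5 else "act"


def process(stimulus):
    route, label = neurocompiler(stimulus)
    if route == "reflex":
        return route, label, "act immediately"  # Mind Kernel never consulted
    return route, label, mind_kernel(stimulus)


calm = Stimulus("payment request from unknown sender", threat_cue=0.3, familiarity=0.2)
urgent = Stimulus("same request, 'account locked in 10 minutes'", threat_cue=0.9, familiarity=0.2)
print(process(calm))    # ('kernel', 'safe', 'verify before acting')
print(process(urgent))  # ('reflex', 'threat', 'act immediately')
```

The same unfamiliar request gets checked when it arrives calmly and acted on when it arrives wrapped in urgency. That asymmetry is what manufactured-urgency lures are built to exploit.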

Exploit Examples

Layer 3: Mind Kernel

The Kernel of Consciousness

Scale: Individual

Function: Persistent read/write storage of beliefs, memories, identity, & emotional architecture. The cognitive system’s core operating layer.

The Mind Kernel is where the cognitive system stores what it believes is true about itself and the world. It’s the most consequential layer for long-term security because deep compromise here can fundamentally change who someone IS.

If the NeuroCompiler is the processor and translator, the Mind Kernel is both persistent storage and operating system core. Beliefs, memories, and emotional architecture consolidate here to create the scaffolding of one’s identity in relation to the world. In this way, the Mind Kernel shapes the lens through which all future data gets interpreted.

In an operating system, kernel-level access means you can modify the rules by which all programs run. Mind Kernel compromise works the same way by changing the belief-formation process itself, not just individual beliefs.

Further, a compromised Mind Kernel will actively recruit new information into the service of maintaining the integrity of the compromise. Even accurate information gets processed through a deformed lens and can reinforce the compromise rather than correcting it.
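A toy model helps show the difference between changing a belief and changing the belief-formation process. The Python sketch below tracks confidence in a belief as log-odds. The honest update adds each piece of evidence as it arrives; the hypothetical compromised update, while the belief is held, rereads disconfirming evidence as confirmation. The `capture` factor and the reinterpretation rule are illustrative assumptions, not a calibrated psychological model.

```python
import math


def to_prob(log_odds):
    """Convert log-odds to a probability (confidence in the belief)."""
    return 1 / (1 + math.exp(-log_odds))


def honest_update(log_odds, evidence):
    """Positive evidence supports the belief, negative contradicts it."""
    return log_odds + evidence


def compromised_update(log_odds, evidence, capture=0.5):
    """Hypothetical corrupted rule: while the belief is held (log-odds > 0),
    contradicting evidence is reread as confirmation, scaled by `capture`."""
    if evidence < 0 and log_odds > 0:
        return log_odds + capture * abs(evidence)
    return log_odds + evidence


def run(update, prior, stream):
    belief = prior
    for evidence in stream:
        belief = update(belief, evidence)
    return belief


prior = 2.0  # roughly 0.88 confidence
accurate_rebuttals = [-1.0] * 5
for update in (honest_update, compromised_update):
    final = run(update, prior, accurate_rebuttals)
    print(f"{update.__name__}: {to_prob(prior):.2f} -> {to_prob(final):.2f}")
```

Fed the same five pieces of accurate, disconfirming evidence, the honest kernel drops from about 0.88 to about 0.05 confidence, while the compromised kernel climbs to about 0.99. Nothing about the evidence differs; only the update rule does.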

This is exactly what Bezmenov described as demoralization. A person with a compromised core can be shown true information and still reject it. The facts stop mattering once the epistemology is broken.

A kernel panic in an operating system halts the system completely rather than continuing in a corrupted state. Humans don’t have that protection. We continue operating through kernel-level compromise, making decisions and forming relationships and influencing others, with no blue screen or audit trail.

Remediation at this layer is not simply a matter of training new heuristics. It requires rebuilding ontology: reconstructing one’s understanding of what’s real using the very cognitive architecture that was compromised. This is closer to what trauma-informed therapists do than to anything in the current cybersecurity toolkit.

Exploit Examples

Layer 4: The Mesh

Emergent Collective Intelligence

Scale: Collective

Function: Emergent intelligence arising between networked minds, controlled by no single node (decentralized).

Everything up to this point has been inside an individual system. The Mesh is the first layer that exists between multiple individuals.

When networked individual units synchronize around shared purposes, beliefs, or narratives with enough intensity and duration, something emerges that no single unit controls: the Mesh. The Mesh develops its own momentum, powerful enough to shape behaviors and decision-making in ways no individual person consciously directs. The group starts running on the Mesh’s programming (allowing it to inform their behavior) rather than the other way around.

There’s an older, more accurate term for this phenomenon: egregore. The concept comes from Western occultism and describes a thought-form that arises from, and then governs, the group that generated it.

Although it may sound esoteric, this is a naturally occurring phenomenon. Every organization, community, and culture generates a Mesh. Substack has one. So do our industry, the hacker community, each generation, every neighborhood, and every family. The Mesh is simply a shared set of assumptions and values that shapes what gets said and done; it’s a structural feature of any sustained collective.

The attack surface this creates is specific. You can’t directly access or modify the Mesh from inside it. Individual contributions enter through sensory output—what is spoken, written, created, done—and aggregate with others’ contributing outputs. No individual controls the aggregate. It operates according to the dynamics of social contagion, network effects, conformity pressure, and narrative momentum.

Consider this thought experiment: an adversary wants to shift the culture of a target organization over 18 months with no direct access. They identify the five most socially influential employees: not the most senior, but the most connected and trusted. They shape the information diet of those five people. Gradually, what those five say and model begins to shift. The Mesh absorbs those outputs, and slowly, the overall culture follows.

Total direct exposure? Five people. Total impact? Potentially an entire organization’s culture. Attribution? Effectively impossible!
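The reconnaissance step in this thought experiment is ordinary network analysis. The Python sketch below ranks people by weighted degree, the sum of trust weights on their ties, and returns the top `k`. The org chart, trust weights, and function names are hypothetical, and weighted degree is only one of several centrality measures an analyst might use.

```python
from collections import defaultdict

# Hypothetical org: (person, person, trust weight in 0-1).
ORG_TIES = [
    ("cto", "vp_eng", 0.6),
    ("vp_eng", "lead_a", 0.5),
    ("lead_a", "dev_1", 0.9),
    ("lead_a", "dev_2", 0.9),
    ("lead_a", "dev_3", 0.8),
    ("dev_1", "dev_2", 0.7),
    ("dev_2", "dev_3", 0.6),
    ("ops_1", "dev_1", 0.8),
    ("ops_1", "dev_3", 0.7),
    ("ops_1", "support_1", 0.5),
    ("support_1", "support_2", 0.8),
]


def influence_scores(ties):
    """Weighted degree: sum of trust weights on each person's ties."""
    scores = defaultdict(float)
    for a, b, trust in ties:
        scores[a] += trust
        scores[b] += trust
    return dict(scores)


def top_influencers(ties, k=5):
    """Highest weighted degree first; names break ties deterministically."""
    scores = influence_scores(ties)
    return sorted(scores, key=lambda name: (-scores[name], name))[:k]


print(top_influencers(ORG_TIES))
```

In this made-up network the CTO ranks last: seniority and connectedness are different properties, which is exactly the point of the thought experiment.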

This is why the Mesh layer is so dangerous in our world of agentic AI. A sufficiently capable system can map a community’s social network, identify the highest-influence nodes, and calculate the minimum intervention required to steer the collective toward a desired state. The adversarial capability here has scaled much faster than our understanding of what’s happening.
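The “minimum intervention” calculation has a well-studied analog in influence maximization on social networks. The Python sketch below swaps in a deterministic linear threshold model (a person adopts a narrative once at least half of their contacts have) and brute-forces the smallest seed set that reaches a target share of the network. The threshold, the toy network, and the brute-force search are assumptions for illustration; the search is exponential, and practical work on larger graphs typically relies on greedy approximations.

```python
from itertools import combinations

# Hypothetical two-cluster network; a1 and b1 are the bridge between clusters.
NETWORK = {
    "a1": ["a2", "a3", "b1"],
    "a2": ["a1", "a3"],
    "a3": ["a1", "a2", "a4"],
    "a4": ["a3"],
    "b1": ["a1", "b2", "b3"],
    "b2": ["b1", "b3"],
    "b3": ["b1", "b2"],
}


def threshold_cascade(neighbors, seeds, threshold=0.5):
    """Deterministic linear-threshold spread: a node adopts once at least
    `threshold` of its neighbors have adopted. Returns the final adopter set."""
    adopted = set(seeds)
    changed = True
    while changed:
        changed = False
        for node, nbrs in neighbors.items():
            if node in adopted or not nbrs:
                continue
            share = sum(n in adopted for n in nbrs) / len(nbrs)
            if share >= threshold:
                adopted.add(node)
                changed = True
    return adopted


def minimum_seed_set(neighbors, target_share, threshold=0.5):
    """Brute force: smallest seed set whose cascade reaches target_share
    of all nodes. Exponential, so only for toy-sized networks."""
    nodes = sorted(neighbors)
    for size in range(1, len(nodes) + 1):
        for seeds in combinations(nodes, size):
            reached = threshold_cascade(neighbors, seeds, threshold)
            if len(reached) / len(nodes) >= target_share:
                return set(seeds), reached
    return set(nodes), set(nodes)


seeds, reached = minimum_seed_set(NETWORK, target_share=1.0)
print(sorted(seeds), f"{len(reached)}/{len(NETWORK)}")
```

In this two-cluster network, no single seed reaches everyone, but seeding the two bridge nodes, `a1` and `b1`, flips the entire network, while seeding `a1` alone already captures a majority (4 of 7).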

Exploit Examples

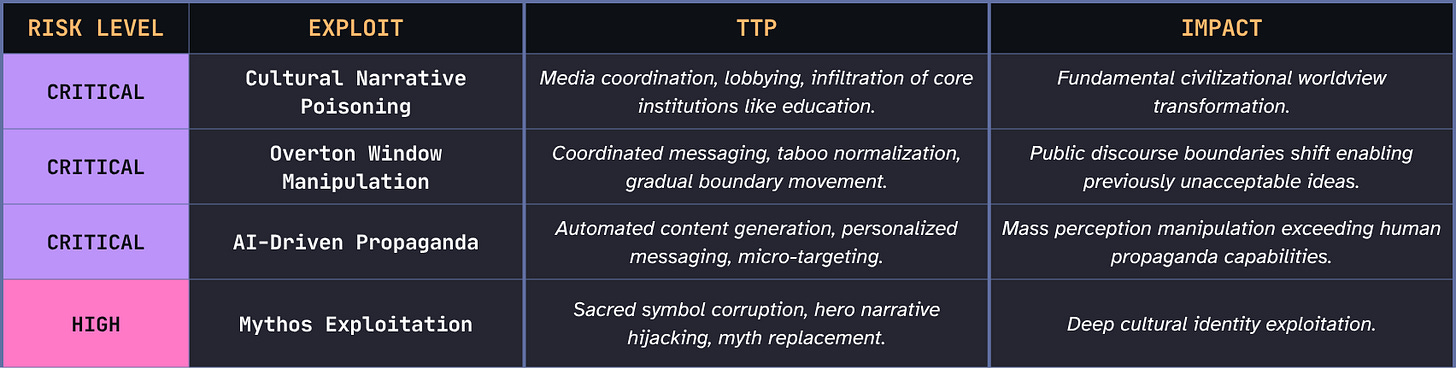

Layer 5: Cultural Substrate

Reality’s Base Layer

Scale: Civilization

Function: Civilizational base layer encoding beliefs, myths, instincts, and the collective unconscious within which all other layers operate.

The Cultural Substrate is the hardest layer to talk about because it’s the medium we’re all already inside. It’s the accumulated substrate of shared myths, archetypes, and collective assumptions so deep they feel more like facts than constructions.

In computing, the substrate is the foundational material on which all circuits are fabricated. Nothing runs without it, and individual components can’t meaningfully alter it from within. That’s what this layer is to civilization: the base material everything else is built on.

Where the Mesh emerges and sometimes dissipates within a human lifetime, the Cultural Substrate changes so slowly that no individual life can perceive the change from inside it. Think of it as the operating mythos of a civilization, the implicit assumptions that organize everything rather than explicit beliefs people articulate. Assumptions about human nature, about what constitutes legitimate authority, about what’s sacred or negotiable…

Attacks at this layer are distinguished by three properties:

Attribution is almost impossible. You can only see the slow accumulation of small shifts that collectively transform shared reality.

The attack surface is load-bearing infrastructure. Cultural narratives are the ground on which individual identity, collective coherence, and institutional legitimacy all rest. Attacking them degrades the shared framework within which information can be evaluated at all.

Remediation operates on generational timescales. Individual cognitive resilience is largely irrelevant at this layer.

If Bezmenov’s long game is bearing fruit, this is where we’d look for signs of the harvest.

Exploit Examples

So What Is Reality Pentesting?

Reality Pentesting proposes transferring methodology from security testing to the cognitive domain by simulating attacks against human perception and decision-making to proactively identify vulnerabilities before adversaries exploit them.

In practice, this might look like scoping a target’s cognitive profile, mapping their primary information inputs across the topology layers, simulating attack chains, scoring the vulnerabilities found, and reporting with guidance for remediation. This follows the same basic engagement structure you’d recognize from any penetration test (reconnaissance, exploitation, reporting, etc.) but targeting cognition rather than tech.
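The engagement structure described here can be sketched as a small data model. In this hypothetical Python version, every input mapping or finding is checked against the layers authorized in the rules of engagement, findings carry a placeholder severity score, and the report ranks them with remediation guidance. The class names, fields, and scope check are assumptions; attack-chain simulation itself is left out and would produce the `Finding` records.

```python
from dataclasses import dataclass, field

LAYERS = ("Sensory Interface", "NeuroCompiler", "Mind Kernel", "Mesh", "Cultural Substrate")


@dataclass
class Finding:
    layer: str
    technique: str
    score: float       # placeholder severity; no agreed cognitive scale exists yet
    remediation: str


@dataclass
class Engagement:
    target: str
    authorized_layers: set  # scope agreed in the rules of engagement
    inputs: dict = field(default_factory=dict)    # layer -> mapped information inputs
    findings: list = field(default_factory=list)  # produced by attack-chain simulation

    def _check_scope(self, layer):
        if layer not in LAYERS:
            raise ValueError(f"unknown layer: {layer}")
        if layer not in self.authorized_layers:
            raise PermissionError(f"{layer} is out of scope for {self.target}")

    def map_inputs(self, layer, sources):
        """Recon: record the target's information inputs at one layer."""
        self._check_scope(layer)
        self.inputs.setdefault(layer, []).extend(sources)

    def record(self, finding):
        """Log a finding from a simulated attack chain."""
        self._check_scope(finding.layer)
        self.findings.append(finding)

    def report(self):
        """Findings ranked by severity, each with remediation guidance."""
        ranked = sorted(self.findings, key=lambda f: f.score, reverse=True)
        return [f"[{f.score:.1f}] {f.layer}: {f.technique} -> {f.remediation}" for f in ranked]


eng = Engagement("volunteer-01", authorized_layers={"Sensory Interface", "NeuroCompiler"})
eng.map_inputs("Sensory Interface", ["work email", "phone calls"])
eng.record(Finding("NeuroCompiler", "manufactured urgency", 7.5, "out-of-band verification habit"))
eng.record(Finding("Sensory Interface", "cloned manager voice", 8.0, "pre-agreed callback phrase"))
print("\n".join(eng.report()))
```

The scope check is the main design choice: out-of-scope layers fail loudly rather than being silently skipped, which mirrors how authorization boundaries work in a conventional pentest.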

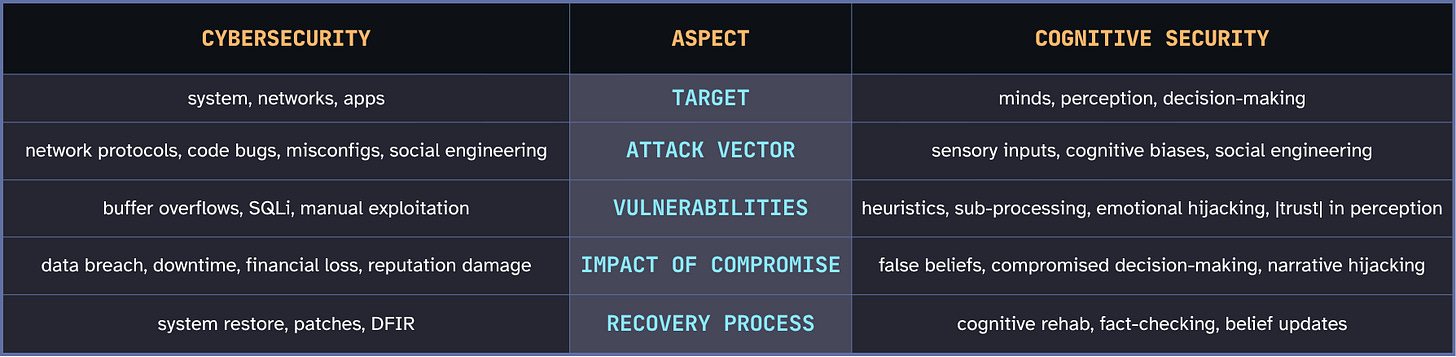

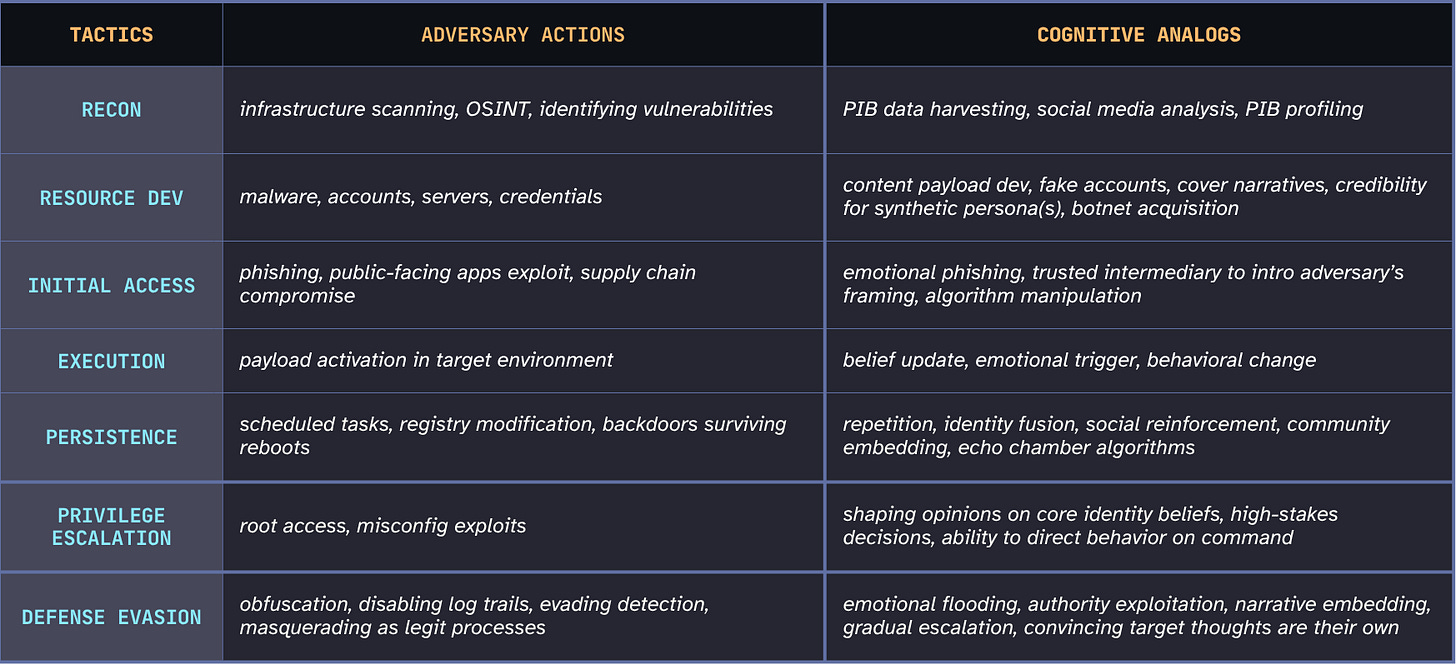

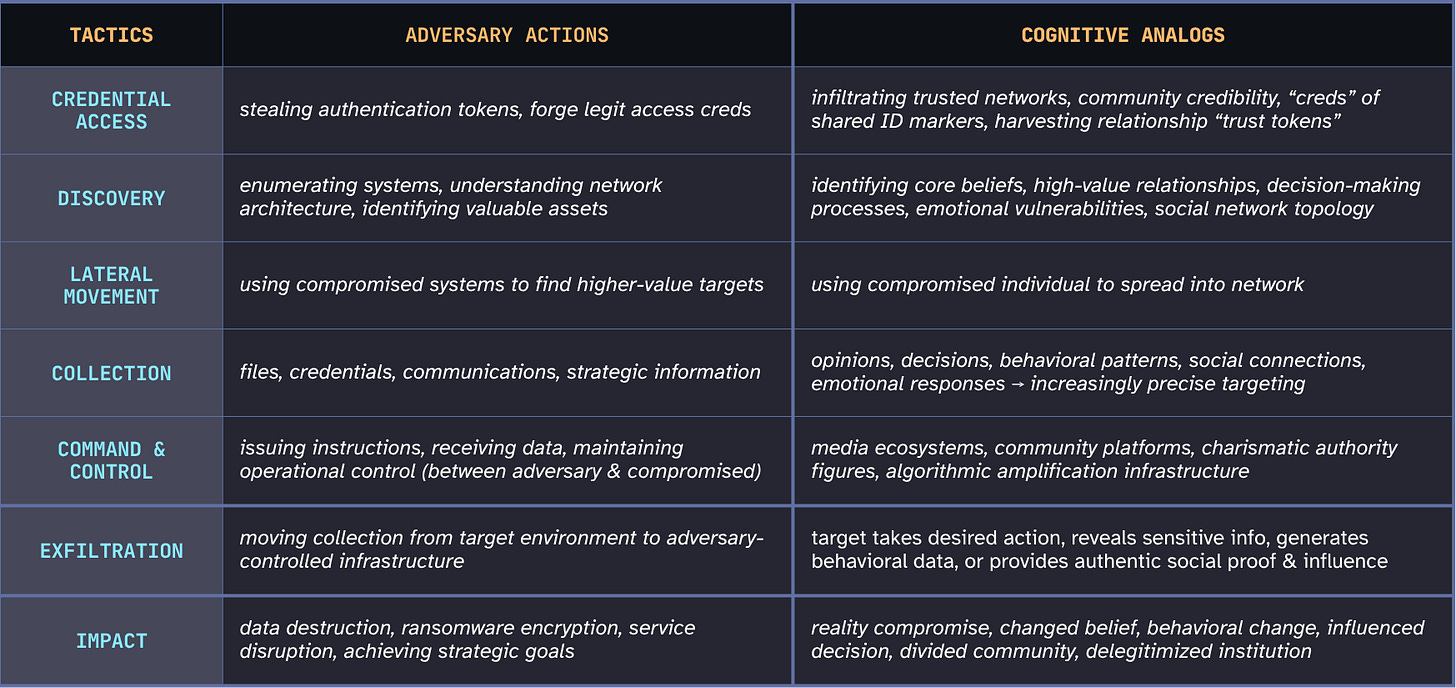

Cognitive Analogs from MITRE ATT&CK Framework

Isn’t Cognitive Security Just Social Engineering?

The distinction between this and social engineering matters. Social engineering is a payload. It’s about convincing someone a specific lie is true. On the other hand, cognitive attacks are the delivery mechanism. They target how the system processes information, not just what information gets processed.

"Where social engineering represents the payload, cognitive attacks are the delivery mechanism — exploiting how the system thinks, not just what it thinks." (Canham & Sawyer, 2026)

A phishing email is social engineering. The trust architecture that makes you believe authority figures without verification is the cognitive attack vector that the phishing email exploits. Defending against social engineering means training people to spot lies. Defending against cognitive attacks means understanding and hardening the processing architecture that makes lies effective in the first place.

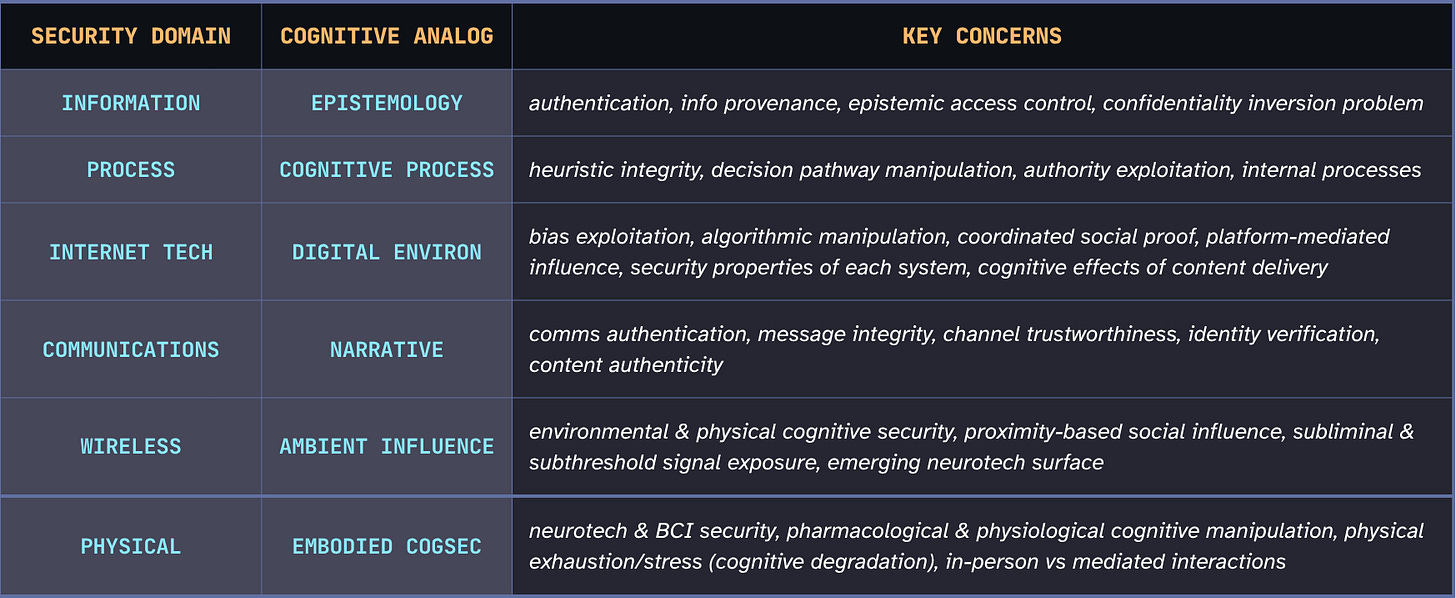

Cognitive Analogs from OSSTMM Security Domains

“This manual is a professional standard for security testing in any environment from the outside to the inside. As a professional standard, it includes the rules of engagement, the ethics for the professional tester, the legalities of security testing, and a comprehensive set of the tests themselves.” — The OSSTMM 2.1

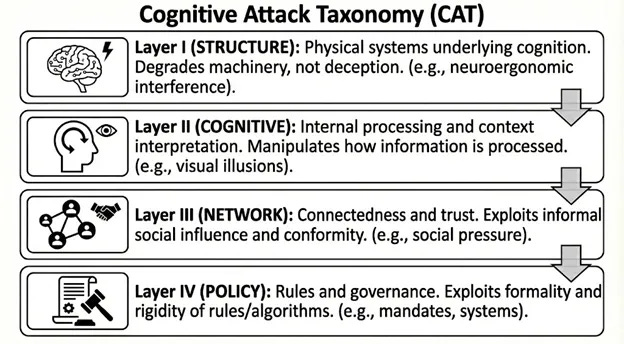

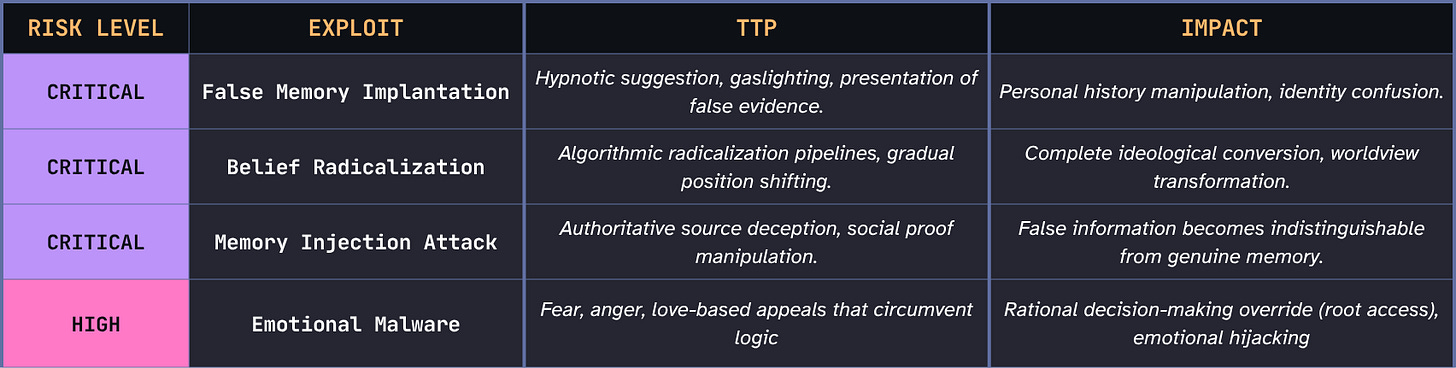

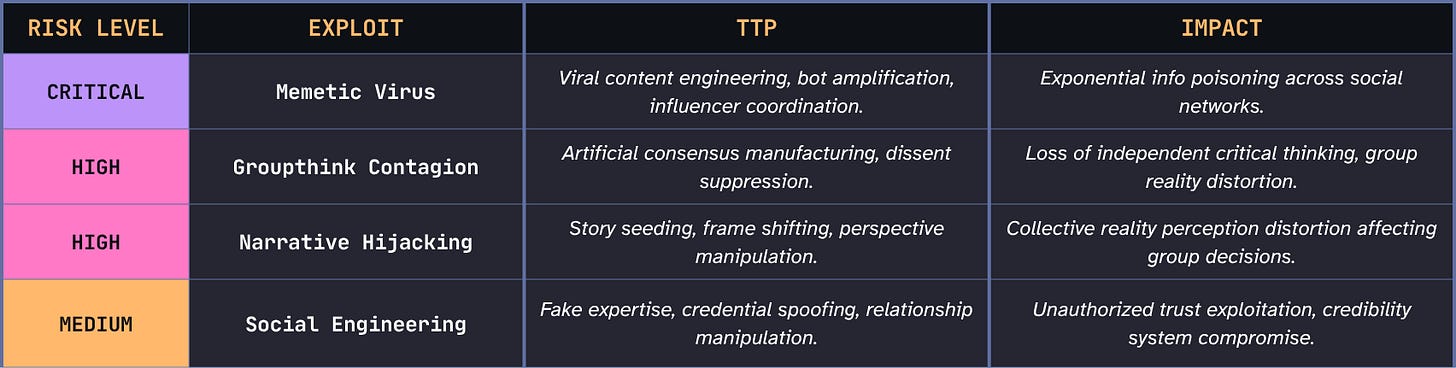

Cognitive Attack Taxonomy (CAT)

The Cognitive Attack Taxonomy is the closest thing the field has to a comprehensive catalog of cognitive architecture and its vulnerabilities.

"Exploiting the psychophysical, neuroergonomic, and psychosocial limitations of cognitive systems to degrade, deny, or deceive decision-making without the explicit and informed consent of the target." (Canham & Sawyer on the CAT, 2026)

It currently contains over 350 entries covering humans, AI systems, and organizational entities. The CAT is particularly significant because it’s sentience-agnostic: the attack surface includes both carbon and silicon cognitive systems.

The topology presented here builds on the CAT’s layered model, extending it to account for the emergent collective (Mesh) and civilizational (Cultural Substrate) layers.

The CAT demonstrates that vulnerabilities can be mapped, scored, and mitigated with the same rigor we apply to technical CVEs, but only if we build a shared framework and language to do it. The CAT gives us the vocabulary and database. Reality Pentesting proposes the methodology and mindset for using it proactively.

The Ghosts in the Machine: Structural Challenges

Any framework worth taking seriously must reckon with its own problems in a rigorous, honest manner. The following are issues that would exist even with unlimited resources and full organizational support.

The Consent Paradox

In traditional pentesting, you have scoped exceptions: written authorization to test without notifying every user, so that behavior can be measured under realistic conditions. In the cognitive domain, no such mechanism exists. Tell the subject in advance and you contaminate the measurement; don’t tell them and you are manipulating a person without their consent. Without adequately scoped exceptions, there’s strong potential to invalidate the entire enterprise.

Ethical Conundrums

Traditional pentesting is also supported by an ethical framework. A pentester goes into engagements with written authorizations, defined scope, rules of engagement that specify what can and cannot be done, and a duty of care to both the systems being tested and the entity that owns them.

Cognitive pentesting has none of this support. What’s your duty of care to someone who, as a result of your test, has their confidence in reality shaken? There is no framework for this in our industry.

Lack of Standardized Metrics

In cyber, we score vulnerabilities with CVSS: a specific CVE gets a 9.1, it affects these systems, and here’s the appropriate patch priority. What’s the equivalent for confirmation bias susceptibility? Without a scoring system, we can’t benchmark, track improvement, or make the business case. If you can’t measure it, you can’t fund it. Building a cognitive vulnerability scoring system is one of the highest-leverage contributions our community could make.
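To show the shape such a system might take (not to propose actual values), here is a hypothetical CVSS-inspired score in Python. Each cognitive vulnerability is rated on three made-up dimensions (exposure, reliability, impact); the ordinal ratings map to numbers, and a weighted sum is scaled to 0 to 10. The dimensions, ratings, and weights are all assumptions, and none of this reproduces the actual CVSS formula.

```python
from dataclasses import dataclass, replace

# Hypothetical ordinal-to-numeric mapping; a real scheme would need calibration.
RATING = {"none": 0.0, "low": 0.33, "medium": 0.66, "high": 1.0}

# Hypothetical weights, for illustration only.
WEIGHTS = {"exposure": 0.3, "reliability": 0.3, "impact": 0.4}


@dataclass(frozen=True)
class CognitiveVuln:
    name: str
    layer: str
    exposure: str     # how reachable the triggering input channel is
    reliability: str  # how consistently the bias fires under attack conditions
    impact: str       # how much decision-making the exploited process controls


def score(vuln):
    """Weighted sum of the rated dimensions, scaled to 0-10."""
    raw = sum(weight * RATING[getattr(vuln, metric)] for metric, weight in WEIGHTS.items())
    return round(10 * raw, 1)


authority = CognitiveVuln("authority bias", "NeuroCompiler", "high", "high", "medium")
after_training = replace(authority, reliability="medium")
print(score(authority), score(after_training))
```

Rescoring the same vulnerability after an intervention that makes the bias fire less reliably drops it from 8.6 to 7.6, the kind of before-and-after comparison that benchmarking and tracking improvement would require.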

Cognitive Remediation Isn’t a Push Update

The patching metaphor that makes this framework accessible is also misleading. You can push software patches overnight to thousands of machines. But patching cognition often requires a human to change habits, media diet, reflexive responses, and social environment. You can’t mandate cognitive firmware updates.

No Audit Log

If a person holds a harmful false belief, was it organically formed? The result of a targeted operation? Cumulative ambient narrative manipulation? How would you know? How would they know? The forensics simply don’t exist. Incident response as currently practiced has no cognitive equivalent.

The Dosage Problem

There’s emerging evidence that aggressive awareness training can produce the opposite of its intended effect. Literature on media literacy programs shows that heavy-handed exposure to manipulation tactics can produce generalized distrust. This distrust can potentially lead to impaired relationship formation, reduced civic participation, and in some cases increased susceptibility to conspiracy-style thinking. The very distrust the program cultivated becomes the vulnerability it was trying to prevent.

Beyond the Individual

You can build personal cognitive resilience—learn to spot deepfakes, to notice anchoring effects, to interrogate biases—and it’s absolutely necessary. But a fully resilient individual embedded in a compromised collective environment is still subject to ambient social proof signals, narrative consensus, and identity pressure from their community.

At civilizational scale, individual resilience is irrelevant. You can personally resist a world-scale narrative shift all you want, and it will still happen around you. The question of what collective remediation looks like, and who even has the resources or standing to implement it, is one this field must sit with.

Nobody has a good answer yet.

Necessary Normative Commitments

If this is to be built seriously, the effort requires certain commitments.

Grounded Red-Teaming Methodology

Red-teaming without a grounded framework quickly becomes manipulation with better branding. The commitment is to build the methodology before deploying the capability. We must have rules of engagement, authorization frameworks, and duty of care protocols in place before running cognitive red-teaming exercises against real people. The field needs its own equivalent of responsible disclosure before capability outpaces ethics.

This will also provide protection for practitioners who want to do this work in good faith, and accountability for those who do it badly. At the moment, the absence of rules is in fact its own vulnerability! All hackers know an unregulated space is an exploitable space.

A Whole-System Approach

This cannot be viewed as a network of siloed attack surfaces. Defense must address the full topology, from individual units through the Cultural Substrate. Not every practitioner needs to work across all five layers, but in the same way security professionals can specialize in a domain while understanding how it interacts with others, this field must develop capability across the full cognitive field.

Reckoning with the Intimacy Problem

When you work at the deeper layers — Mind Kernel, belief & emotional architectures, identity, cultural narratives — you come in contact with how a person constructs meaning and defends their understanding of reality. That is not a technical engagement.

A pentester who finds a vulnerability documents and reports it. Someone doing cognitive security work who surfaces a vulnerability in a person’s belief architecture...? What happens next? What’s the protocol? What’s the equivalent of “don’t deploy until the patch is ready”?

Therapists who work with ontological rupture (e.g., cult deprogramming, trauma survivors, moral injury) receive years of training for exactly this. As an industry born largely out of cybersecurity, where the technical side has been prioritized over the human to a fault, we cannot accept a preparedness gap this time.

Where This Stands

I want to be clear about what I’m claiming and what I’m not.

This is not a finished methodology. It is the first version of a proposal with a mapped attack surface, an honest account of its own structural problems, and a clear sense of what it would need to become something deployable.

This is at least more than existed before, but certainly so much less than what is needed. Its value depends entirely on being broken, tested, and rebuilt by people who understand the stakes.

I welcome your contributions, contradictions, and comments to Reality Pentesting as a living concept!

(The full slide deck from my presentation on this topic to the CSI Weekly community meeting in March 2026 is available on my GitHub. The list of references that have contributed to my work in this space will be posted soon to another repo there.)

Hey there Ms. Melton,

Ever since the webinar last week, I have been taking my time with this article, reading and rereading it carefully, and the more I sit with it, the more two things become unmistakably clear to me.

First, we are both trying to solve the same problem, and second, in many places we are saying the exact same thing, just arriving from different directions and through different vocabularies. That kind of convergence, when it happens independently, tends to mean something.

I reached out to you directly by email a little while ago and look forward to that conversation. But I wanted to engage here as well because this work deserves public dialogue.

The framework you have built is impressive and genuinely rigorous. The five-layer topology, the honest accounting of the structural challenges, the mapping onto established security methodologies, this is the kind of foundational work the field has been missing. That said, I want to be clear that what follows is not a challenge but an invitation to think together.

You make the case compellingly that perception is the new attack surface. I agree completely. My question, and I am stress-testing my own model here as much as yours, is this: if perception is the new attack surface, where is the most effective entry point for intervention? And does the answer to that question change how we approach the ghosts in the machine?

I ask because I think the entry point question is load-bearing for everything else. The intervention layer you choose, upstream or downstream, environmental or individual, before consolidation of beliefs or after, determines whether the structural challenges you have identified are problems to be solved or constraints to be designed around.

I would genuinely love to hear where your thinking is on this, and how you are currently approaching those structural challenges in your ongoing research.

With the utmost respect toward what you're building,

Shaq